Transformer Sequence-to-Sequence Approach

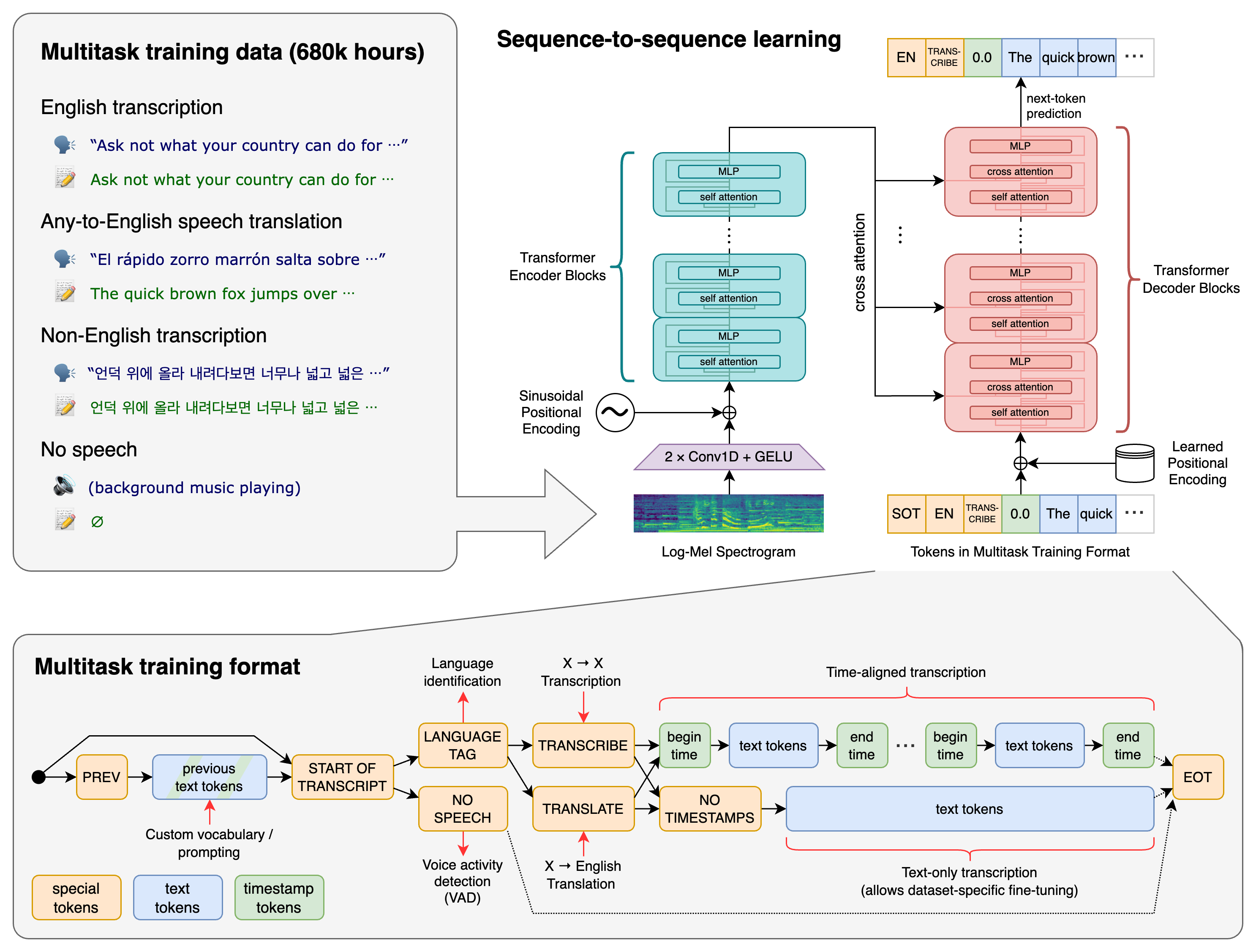

Whisper uses a Transformer sequence-to-sequence model trained on various speech processing tasks. This architecture allows a single model to replace many stages of a traditional speech-processing pipeline.

Unified Task Representation

All tasks are jointly represented as a sequence of tokens to be predicted by the decoder, including:

- Multilingual speech recognition

- Speech translation

- Spoken language identification

- Voice activity detection

The multitask training format uses a set of special tokens that serve as task specifiers or classification targets, enabling seamless switching between different speech processing tasks.
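The token-based task format described above can be illustrated with a small sketch. The helper name `build_decoder_prompt` is hypothetical and works on plain strings rather than Whisper's real tokenizer. The token spellings follow the conventions used in the Whisper paper and should be treated as illustrative.

```python
from typing import List


def build_decoder_prompt(language: str, task: str, timestamps: bool = True) -> List[str]:
    """Assemble an illustrative special-token prefix for the decoder.

    Hypothetical helper: it mimics the multitask format with strings and
    does not call the real Whisper tokenizer.
    """
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unknown task: {task!r}")

    prompt = [
        "<|startoftranscript|>",  # marks the start of a prediction
        f"<|{language}|>",        # language token (also the language-ID target)
        f"<|{task}|>",            # task specifier
    ]
    if not timestamps:
        prompt.append("<|notimestamps|>")  # request text without timestamp tokens
    return prompt


print(build_decoder_prompt("fr", "translate", timestamps=False))
```

Switching tasks only changes the prefix, not the model. In the same spirit, a segment without speech is signalled by a dedicated no-speech token instead of text.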

Multitask Training

Whisper’s training approach combines multiple speech processing tasks into a single model:

- Speech Recognition: Transcribe audio to text in the same language

- Translation: Translate foreign language speech to English

- Language Detection: Identify the spoken language in audio

- Voice Activity: Detect when speech is present in audio

How It Works

The transcribe() method processes audio using:

- 30-second sliding window: Audio is processed in successive 30-second segments

- Autoregressive predictions: The model generates text token by token

- Special tokens: Task-specific tokens guide the model’s behavior
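The windowing step can be sketched in a few lines. This is a simplified illustration, not Whisper's actual implementation. It assumes 16 kHz audio and a fixed stride equal to the window length, whereas the real transcribe() loop decides how far to advance from the timestamps the model predicts. The name `window_bounds` is hypothetical.

```python
from typing import Iterator, Tuple

SAMPLE_RATE = 16_000   # assumption: audio already resampled to 16 kHz
WINDOW_SECONDS = 30    # window length described above


def window_bounds(
    num_samples: int,
    sample_rate: int = SAMPLE_RATE,
    window_seconds: int = WINDOW_SECONDS,
) -> Iterator[Tuple[int, int]]:
    """Yield (start, end) sample indices for successive fixed-size windows.

    Simplification: the window always advances by its full length.
    """
    window = sample_rate * window_seconds
    start = 0
    while start < num_samples:
        end = min(start + window, num_samples)  # last window may be shorter
        yield start, end
        start = end


# A 70-second clip is covered by two full windows and one 10-second remainder.
for start, end in window_bounds(70 * SAMPLE_RATE):
    print(start / SAMPLE_RATE, end / SAMPLE_RATE)
```

Each window would then be decoded autoregressively, with the special tokens selecting the task for that segment.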

Key Features

Multiple Model Sizes

Six model sizes are available, from tiny (39M parameters) to large (1550M parameters), offering different speed and accuracy tradeoffs.

99 Languages Supported

Whisper supports transcription and translation for 99 languages with varying performance levels.

English-Only Models

Specialized English-only variants (.en models) offer better performance on English audio.

Optimized Turbo Model

The turbo model is an optimized version of large-v3 that offers roughly 8x faster transcription with minimal accuracy loss.

The turbo model is not trained for translation tasks. Use the multilingual models (tiny, base, small, medium, large) for translation.
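One way to respect this restriction in code is to choose the model from the task before loading it. The sketch below assumes the `openai-whisper` package is installed. `choose_model` and `run` are hypothetical helpers, and falling back to `medium` for translation is an arbitrary choice made for illustration, not a recommendation from the source.

```python
MULTILINGUAL_MODELS = ("tiny", "base", "small", "medium", "large")


def choose_model(task: str, size: str = "turbo", fallback: str = "medium") -> str:
    """Return a model name that supports the requested task.

    Hypothetical helper: turbo is swapped for `fallback` when translating,
    because turbo is not trained for translation.
    """
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unknown task: {task!r}")
    if fallback not in MULTILINGUAL_MODELS:
        raise ValueError(f"fallback must be one of {MULTILINGUAL_MODELS}")
    if task == "translate" and size == "turbo":
        return fallback
    return size


def run(audio_path: str, task: str = "transcribe", size: str = "turbo") -> str:
    """Transcribe or translate an audio file and return the resulting text."""
    import whisper  # imported lazily; requires the openai-whisper package

    model = whisper.load_model(choose_model(task, size))
    result = model.transcribe(audio_path, task=task)
    return result["text"]
```

Calling `run("audio.mp3", task="translate")` would therefore load a multilingual model instead of turbo. The file name here is only a placeholder.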