Make sure you’ve completed the installation before starting this guide.

List available domains

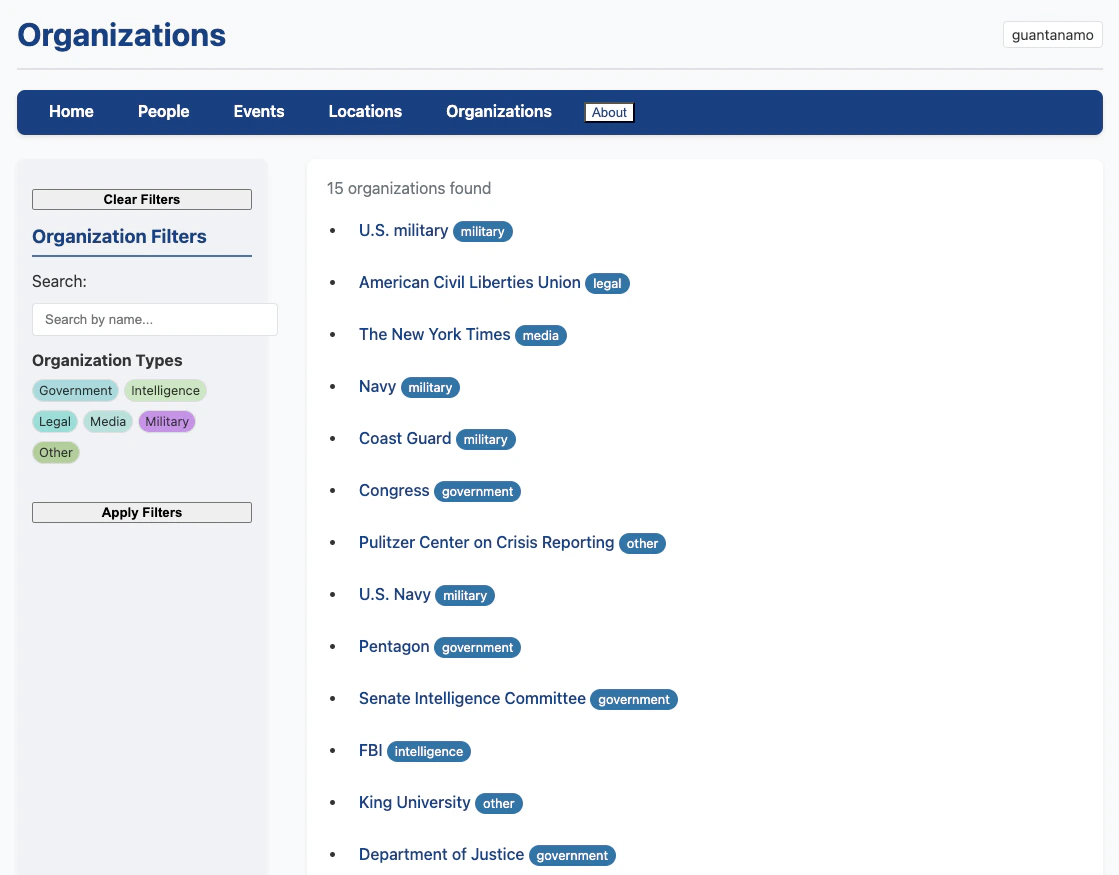

First, check what research domains are already configured:guantanamo domain that ships with Hinbox, plus the template domain.

Create a new research domain

Let’s create a domain for researching the history of food in Palestine:configs/palestine_food_history/ with these files:

config.yaml- Research domain settings and data pathsprompts/*.md- Extraction instructions for each entity typecategories/*.yaml- Entity type definitions

Edit the domain configuration

Open

configs/palestine_food_history/config.yaml and update the domain settings:Customize entity types

Edit Similarly, customize

configs/palestine_food_history/categories/people.yaml to define relevant person types:organizations.yaml, locations.yaml, and events.yaml for your domain.Prepare your data

Hinbox expects historical sources in Parquet format with these columns:| Column | Description |

|---|---|

title | Document or article title |

content | Full text content |

url | Source URL (if applicable) |

published_date | Publication or creation date |

source_type | Type: “book_chapter”, “journal_article”, “news_article”, “archival_document” |

Process your sources

Now you’re ready to process historical sources and extract entities:- Processes the first 5 articles

- Checks relevance to your domain

- Extracts entities using AI models

- Applies quality controls and retries if needed

- Deduplicates entities using smart matching

- Saves results to

data/palestine_food_history/entities/

Start with

--limit 5 to test your configuration. Once you’re satisfied with the results, remove the limit to process all sources.Processing options

Hinbox provides several options to control processing:Explore your results

Launch the web interface to browse extracted entities:- Dashboard - Overview of all entities by type

- Entity listings - Browse people, organizations, locations, and events

- Entity profiles - Detailed profiles with sources, aliases, and version history

- Search and filtering - Find specific entities quickly

- Confidence badges - Visual indicators of extraction quality

Understanding the output

Entity files

Results are saved as Parquet files in your output directory:id- Unique entity identifiercanonical_name- Best display name selected by 5-layer scoringaliases- Alternative names found in sourcestype- Entity type (e.g., “farmer”, “trader”)description- Extracted descriptionsource_articles- List of articles mentioning this entityconfidence_score- Quality metricversion- Profile version number

Processing status

Check which articles have been processed:- Total articles in your dataset

- Articles processed successfully

- Articles pending processing

- Articles that failed

Cache directory

Extraction results are cached to avoid redundant LLM calls:cache.extraction.version in config.yaml to invalidate the cache.

Next steps

Configuration guide

Learn advanced configuration options for your domain

Processing pipeline

Understand how extraction, merging, and QC work

Web interface

Explore the FastHTML frontend features

API reference

Technical details of the processing engine

Tips for better results

1. Write specific prompts

Generic prompts produce generic results. Make your extraction prompts specific to your historical period and sources:2. Define narrow entity types

Specific entity types improve extraction quality:3. Use relevance filtering

Enable relevance checking to skip irrelevant sources:prompts/relevance.md to define what makes a source relevant to your research.

4. Adjust similarity thresholds

Fine-tune deduplication for each entity type inconfig.yaml:

5. Monitor quality

Use verbose mode to see extraction quality issues:- QC warnings about missing fields

- Automatic retry attempts

- Merge dispute agent decisions

- Cache hit rates