What is Memori BYODB?

Memori is a memory layer for LLM applications, agents, and copilots. It continuously captures interactions, extracts structured knowledge, and ranks, decays, and retrieves the relevant memories, so your AI remembers the right things at the right time across every session.

Want a zero-setup option? Try Memori Cloud at app.memorilabs.ai.

Why Memori BYODB?

Database Freedom

Use SQLite, PostgreSQL, MySQL, MariaDB, Oracle, MongoDB, CockroachDB, or OceanBase. Managed providers like Neon, Supabase, and AWS RDS/Aurora are also supported through their compatible engines.

Full Data Ownership

Your data stays in your database, on your infrastructure. You keep full compliance and regulatory control, with no third-party storage.

LLM Provider Support

OpenAI, Anthropic, Gemini, and Grok (xAI) are supported via direct SDK wrappers. Bedrock is supported via LangChain ChatBedrock. OpenAI-compatible providers (Nebius, DeepSeek, NVIDIA NIM, Azure OpenAI, and more) work through OpenAI’s base_url parameter.

Memori supports sync, async, streamed, and unstreamed modes, plus LangChain, Agno, and Pydantic AI.

Semantic Recall

Semantic search surfaces the right memories at the right time. Memories are ranked by relevance and importance, with intelligent decay so that older or less relevant facts recede and your AI stays contextually aware without clutter. Recall any memory later with semantic search; use manual recall for custom prompts, UIs, or debugging.

Quick Example

Get started with a database connection and your favorite LLM:

Key Features

One-Line Setup

Connect your DB and LLM; memory capture, augmentation, and recall work without extra config.

Semantic Recall

Queries like “what does this user prefer?” pull the right memories automatically.

Dashboard Access

Use app.memorilabs.ai for API keys, usage tracking, and the optional Graph Explorer and Playground (requires Memori Cloud).

Your Data, Your Rules

Store everything in your DB; compliance, backups, and custom analytics stay under your control.

OpenAI-Compatible Providers

Memori supports any model that uses OpenAI’s client interface via the base_url parameter, including Nebius, DeepSeek, NVIDIA NIM, and more.
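As a rough illustration of the base_url pattern, the sketch below builds the constructor arguments for an OpenAI-style client pointed at a different endpoint. The endpoint URL, the `PROVIDER_API_KEY` environment variable, and the `openai_compatible_kwargs` helper are placeholders invented for this example, not values from Memori or any provider; check your provider's documentation for the real ones.

```python
import os

# Hypothetical placeholder endpoint; substitute your provider's documented URL.
EXAMPLE_BASE_URL = "https://llm.example.invalid/v1"


def openai_compatible_kwargs(base_url: str, api_key_env: str = "PROVIDER_API_KEY") -> dict:
    """Build constructor kwargs for an OpenAI-style client aimed at another provider."""
    if not base_url.startswith(("http://", "https://")):
        raise ValueError(f"base_url must be an http(s) URL, got {base_url!r}")
    return {
        "base_url": base_url.rstrip("/"),
        # Fall back to a dummy value so the sketch runs without credentials.
        "api_key": os.environ.get(api_key_env, "sk-placeholder"),
    }


kwargs = openai_compatible_kwargs(EXAMPLE_BASE_URL)

try:
    # The OpenAI Python SDK accepts base_url and api_key as constructor arguments.
    from openai import OpenAI

    client = OpenAI(**kwargs)
except ImportError:
    client = None  # SDK not installed; the kwargs above can still be inspected.
```

Constructing the client does not contact the endpoint, so this is safe to run offline. The resulting client is the object you would then hand to Memori in the same way as a standard OpenAI client.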

Core Concepts

| Concept | Description | Example |
|---|---|---|
| Entity | Person, place, or thing (like a user) | entity_id="user_123" |
| Process | Your agent, LLM interaction, or program | process_id="support_agent" |
| Session | Groups LLM interactions together | Auto-generated UUID, manually manageable |
| Augmentation | Background AI enhancement of memories | Auto-runs after wrapped LLM calls |
| Recall | Retrieve relevant memories from previous interactions | Auto-injects recalled memories |
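
To make the first three concepts concrete, here is a minimal sketch of how an entity, a process, and a session might travel together as one scope. `MemoryScope` is a hypothetical class written for this illustration and is not part of the Memori SDK; only the `entity_id` and `process_id` names and the auto-generated UUID behavior come from the table above.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class MemoryScope:
    """Hypothetical container for the identifiers in the table above."""

    entity_id: str   # who or what the memories are about, e.g. a user
    process_id: str  # which agent or program produced the interaction
    # Sessions group related LLM calls; default to a fresh UUID unless one is supplied.
    session_id: str = field(default_factory=lambda: str(uuid.uuid4()))


# Auto-generated session id.
first = MemoryScope(entity_id="user_123", process_id="support_agent")

# Manually managed session: reuse the id to keep later calls in the same group.
resumed = MemoryScope("user_123", "support_agent", session_id=first.session_id)
```

Keeping the scope immutable means a session id cannot drift partway through a conversation; starting a new session is simply a matter of creating a new scope.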

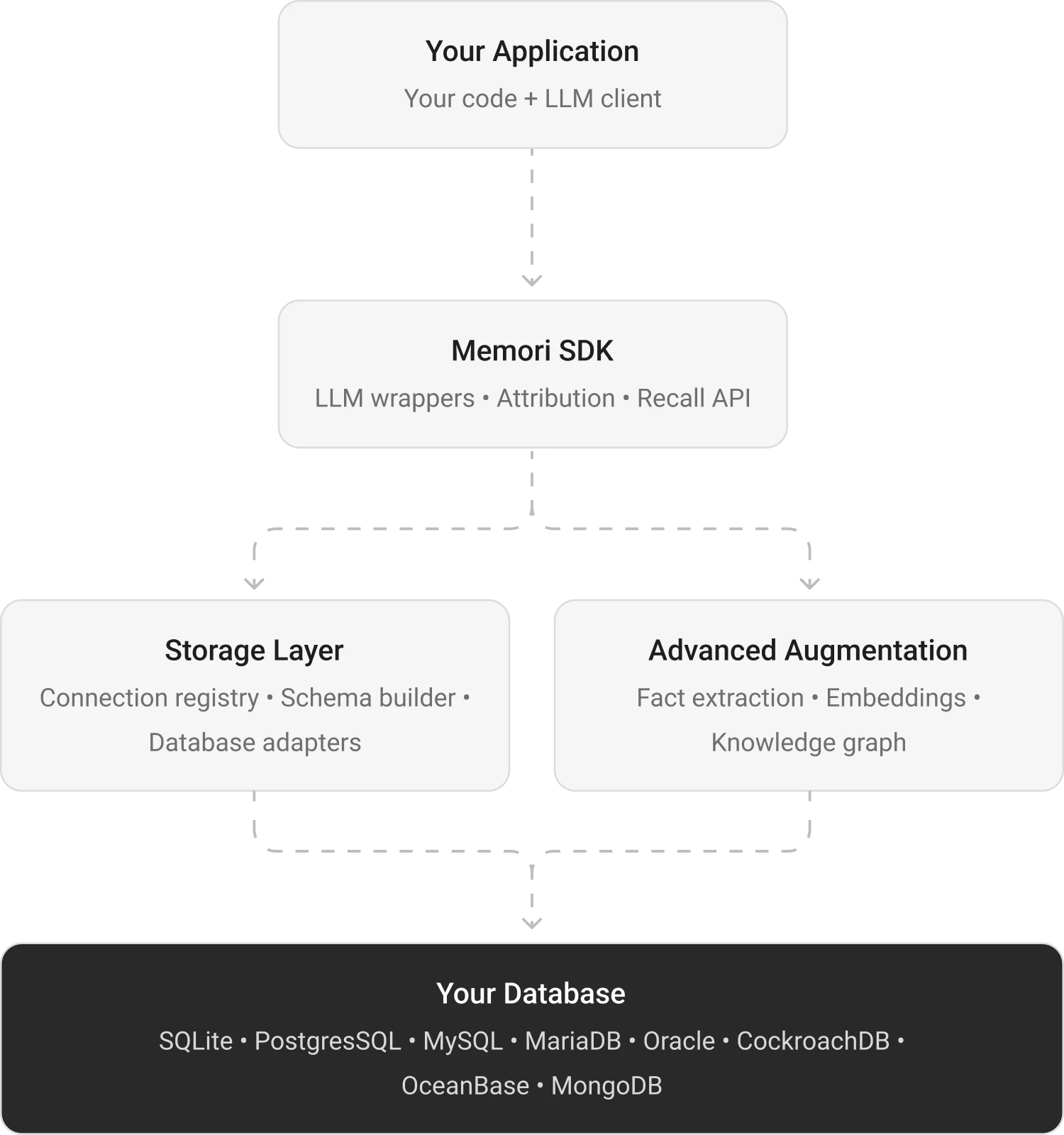

Architecture Overview

The diagram shows three lanes: your app, the Memori SDK, and your own database. Your app calls the LLM normally, Memori intercepts the call, and the synchronous response path continues with zero added latency.

Synchronous capture: Conversation messages are stored in memori_conversation_message while your normal LLM flow continues.

Recall injection: Relevant memories are pulled from memori_entity_fact and injected into later prompts.

Async augmentation: Background processing extracts facts, preferences, rules, events, and relationships from conversations.

Own-your-data storage: Structured memory records are written to your database, including memori_entity_fact, memori_process_attribute, and memori_knowledge_graph.
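
The flow above can be sketched with the standard library alone. This is a toy model under stated assumptions, not Memori's implementation: the table names come from the overview, but the columns, the `capture` / `augment_worker` / `build_prompt` functions, and the keyword-based "fact extractor" are all invented for illustration (real augmentation would be far richer than a substring match).

```python
import queue
import sqlite3
import threading

conn = sqlite3.connect(":memory:", check_same_thread=False)
db_lock = threading.Lock()  # serialize access to the shared connection
# Table names follow the overview; the columns are invented for this sketch.
conn.execute("CREATE TABLE memori_conversation_message (entity_id TEXT, role TEXT, content TEXT)")
conn.execute("CREATE TABLE memori_entity_fact (entity_id TEXT, fact TEXT)")

pending = queue.Queue()


def capture(entity_id, role, content):
    """Synchronous capture: store the raw message, then hand off to the background."""
    with db_lock:
        conn.execute("INSERT INTO memori_conversation_message VALUES (?, ?, ?)",
                     (entity_id, role, content))
        conn.commit()
    pending.put((entity_id, role, content))


def augment_worker():
    """Async augmentation: a toy extractor that keeps user messages mentioning 'prefer'."""
    while True:
        item = pending.get()
        if item is None:  # sentinel: stop the worker
            pending.task_done()
            break
        entity_id, role, content = item
        if role == "user" and "prefer" in content.lower():
            with db_lock:
                conn.execute("INSERT INTO memori_entity_fact VALUES (?, ?)",
                             (entity_id, content))
                conn.commit()
        pending.task_done()


def build_prompt(entity_id, user_message):
    """Recall injection: prepend stored facts for this entity to the next prompt."""
    with db_lock:
        rows = conn.execute("SELECT fact FROM memori_entity_fact WHERE entity_id = ?",
                            (entity_id,)).fetchall()
    if not rows:
        return f"User: {user_message}"
    facts = "\n".join(f"- {fact}" for (fact,) in rows)
    return f"Known facts:\n{facts}\n\nUser: {user_message}"


worker = threading.Thread(target=augment_worker, daemon=True)
worker.start()

capture("user_123", "user", "I prefer concise answers.")
capture("user_123", "assistant", "Noted.")
pending.join()  # wait for background work; only needed to make this demo deterministic

prompt = build_prompt("user_123", "Summarize my last ticket.")
pending.put(None)
```

The point of the split is that `capture` returns as soon as the raw message is written, while extraction happens on the worker thread and only affects later prompts.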

Database Tables

When you run mem.config.storage.build(), Memori creates these tables in your database:

memori_conversation_message: Raw conversation messages

memori_entity_fact: Extracted facts about entities

memori_process_attribute: Process-specific attributes

memori_knowledge_graph: Relationship graph data

memori_session: Session tracking
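
One way to confirm the build step ran is to check that these tables exist. The sketch below assumes SQLite, whose catalog is the `sqlite_master` table; other engines expose their catalogs differently. The `missing_memori_tables` helper is hypothetical, and the demo creates stand-in tables with a made-up single-column schema rather than calling Memori.

```python
import sqlite3

# Table names as listed above.
EXPECTED_TABLES = {
    "memori_conversation_message",
    "memori_entity_fact",
    "memori_process_attribute",
    "memori_knowledge_graph",
    "memori_session",
}


def missing_memori_tables(conn: sqlite3.Connection) -> set:
    """Return the expected table names that are not present in this SQLite database."""
    rows = conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'").fetchall()
    present = {name for (name,) in rows}
    return EXPECTED_TABLES - present


# Demo against an in-memory database; the single-column schema is a stand-in only.
conn = sqlite3.connect(":memory:")
for table in ("memori_session", "memori_entity_fact"):
    conn.execute(f"CREATE TABLE {table} (id INTEGER PRIMARY KEY)")

missing = missing_memori_tables(conn)
```

An empty result means every expected table is present; anything else names the tables that still need to be created.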

Next Steps

Install Memori

Get started by installing the Memori SDK and your database driver.

Go to Installation →

Quick Start

Build your first memory-enabled application with SQLite in under 3 minutes.

Go to Quick Start →

Choose Your Database

Explore supported databases and connection patterns.

Go to Databases →