Knowledge Graph

Memori automatically builds a knowledge graph from your AI conversations. Each time Advanced Augmentation processes a conversation, it extracts structured relationships — semantic triples — and connects them into a graph. This powers richer recall and gives your AI deeper understanding of each user.How It Works

- Conversation captured — Your user talks to your AI through the Memori-wrapped LLM client

- Augmentation processes — Memori analyzes the conversation in the background

- NER extraction — Named-entity recognition identifies key entities and relationships

- Triple creation — Relationships are expressed as subject-predicate-object triples

- Graph storage — Triples are stored and deduplicated in the knowledge graph

- Recall ready — The graph is available for semantic search on subsequent LLM calls

Semantic Triples

Every fact in the knowledge graph is a semantic triple — a three-part statement: [Subject] [Predicate] [Object].- “Alice” “prefers” “dark mode”

- “PostgreSQL” “is” “a relational database”

- “The project” “uses” “FastAPI”

Example Extraction

From “My favorite database is PostgreSQL and I use it with FastAPI for our REST APIs. I’ve been using Python for about 8 years”:| Subject | Predicate | Object |

|---|---|---|

| user | favorite_database | PostgreSQL |

| user | uses | FastAPI |

| user | uses_for | REST APIs |

| user | uses_with | PostgreSQL + FastAPI |

| user | experience_years | Python (8 years) |

Triple Deduplication

Memori automatically deduplicates triples to avoid storing redundant information: First mention:memori/memory/augmentation/augmentations/memori/_augmentation.py:246-251

Storage Structure

Normalized Tables

Triples are stored in a normalized schema to minimize redundancy:| Table | Purpose |

|---|---|

memori_subject | Stores unique subjects |

memori_predicate | Stores unique predicates |

memori_object | Stores unique objects |

memori_knowledge_graph | Links subjects, predicates, and objects into triples |

memori_entity_fact | Stores facts with vector embeddings for recall |

Knowledge Graph Table Schema

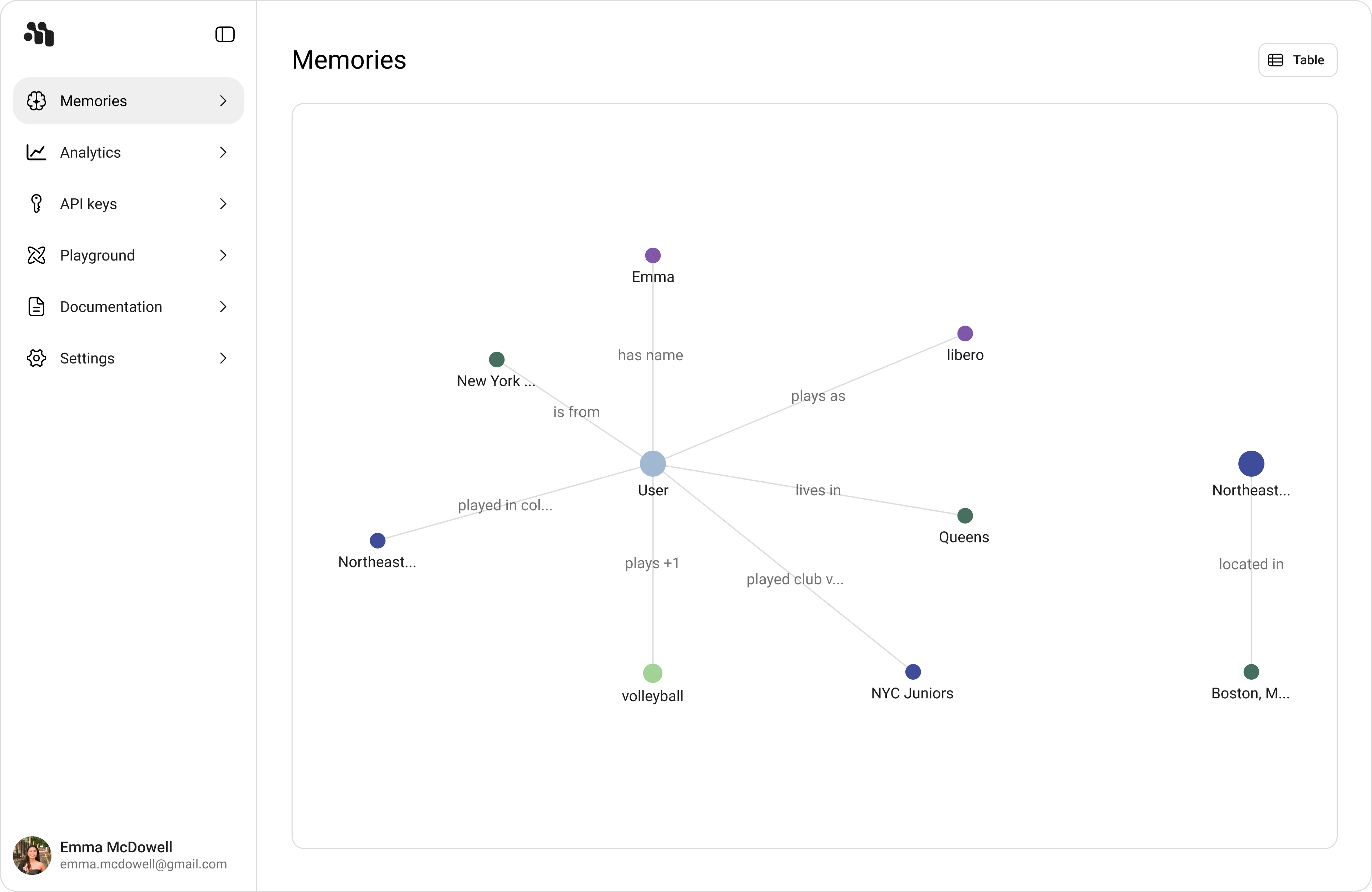

Visualizing the Graph

The Memori Playground at app.memorilabs.ai includes a Memory Graph Viewer that shows:| Element | What it shows |

|---|---|

| Nodes | Subjects and objects from semantic triples |

| Edges | Predicates (relationships) between nodes |

| Mention counts | How often a fact was discussed across sessions |

| Timestamps | When facts were first and last seen |

Scope

The knowledge graph follows the same scoping rules as other memory types:| Aspect | Scope |

|---|---|

| Triples | Per entity — shared across all processes |

| Visibility | All processes for an entity can see and use the graph |

| Growth | Conversations from any process contribute to the entity’s graph |

Querying the Graph

Via Recall API

The knowledge graph is automatically used during recall. When you callmem.recall() or make an LLM call through a wrapped client, Memori searches across both extracted facts and the knowledge graph to find the most relevant context.

Via Direct SQL (BYODB Only)

If you’re using BYODB, you can query the knowledge graph directly:View All Triples

View All Triples

Triples for an Entity

Triples for an Entity

Most Mentioned Relationships

Most Mentioned Relationships

Triple-to-Fact Conversion

Memori automatically converts semantic triples into natural language facts for embedding and recall:_augmentation.py:188-192, 221-236

Hybrid Approach

Memori uses both triples and facts for maximum flexibility:- Triples provide structured, queryable relationships

- Facts enable semantic search via embeddings

- Both are generated from the same extraction process

- Query precision (triples)

- Semantic understanding (facts with embeddings)

Integration with Recall

The knowledge graph enhances recall by providing additional context: Without knowledge graph:- Query: “What programming languages do I know?”

- Recall: Direct fact matches only

- Query: “What programming languages do I know?”

- Recall: Facts + related triples (experience_years, uses_with, projects)

- Result: Richer, more contextual answers

Building Relationships Over Time

As conversations accumulate, the knowledge graph builds deeper relationships: Conversation 1:- What the user uses (Python, FastAPI)

- How long they’ve used it (8 years)

- How they use it together (Python + FastAPI)

Knowledge Graph Schema (ERD)

The knowledge graph is built automatically — you don’t need to extract triples manually. Just have conversations through Memori, and the graph grows organically.

Performance Considerations

Triple Deduplication

Memori uses hash-based lookups to deduplicate triples efficiently:- O(1) lookup for existing triples

- Batched inserts for new triples

- Incremental mention count updates

Indexing

The knowledge graph tables are indexed on:entity_id(for per-entity queries)subject_id,predicate_id,object_id(for relationship queries)mention_count(for ranking)