Overview

Netflix has evolved from a simple DVD rental service to the world’s leading streaming platform, serving over 200 million subscribers across 190+ countries. This case study explores the architectural decisions, technology choices, and evolution that enable Netflix to stream billions of hours of content globally.

Architecture Evolution

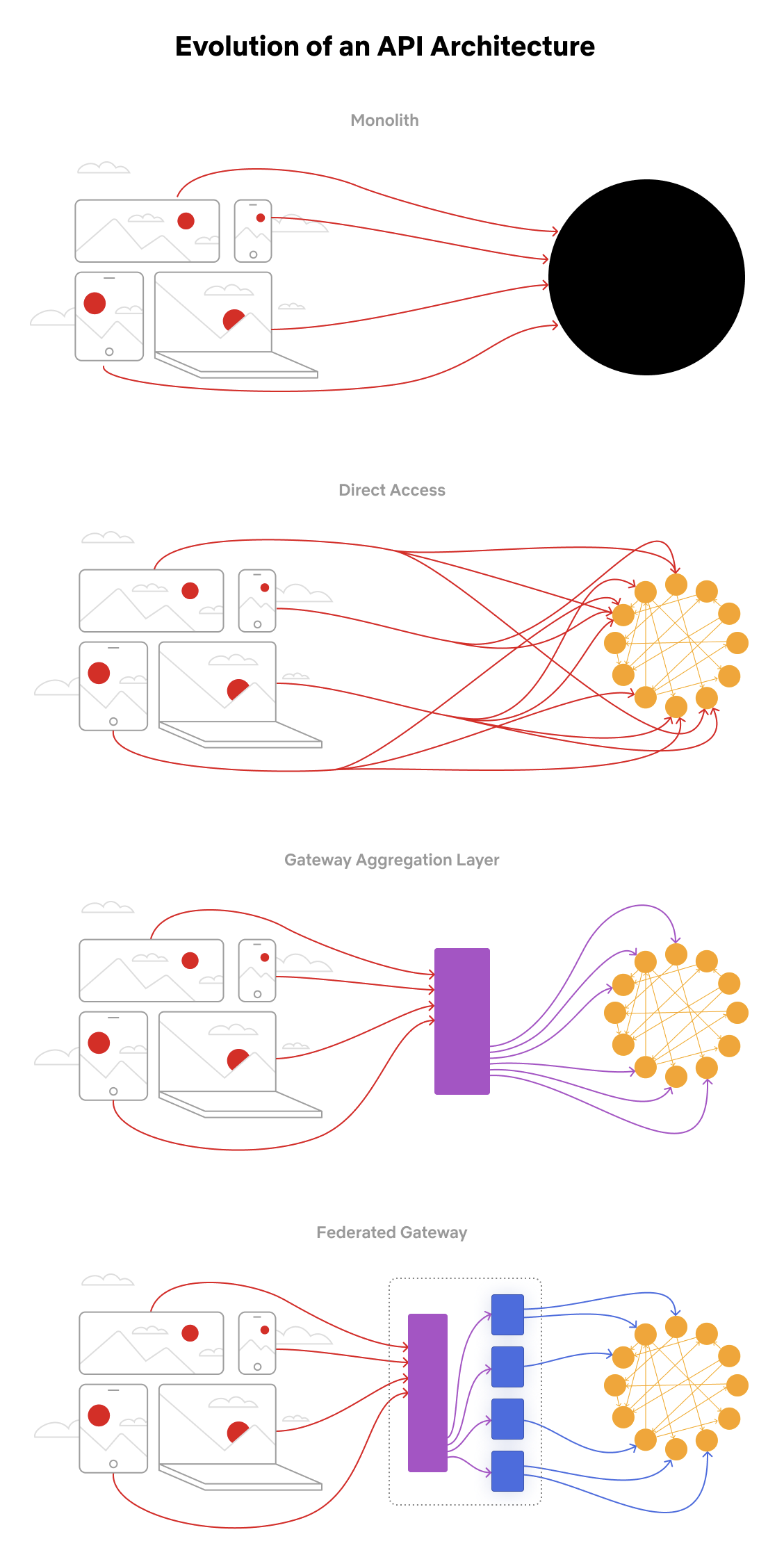

The Four Stages of Netflix API Architecture

Stage 1: Monolith (Early Days)

The application was packaged and deployed as a single monolith - a Java WAR file. This approach is typical for most startups as it allows for rapid development and simple deployment.

Challenges:

- Difficult to scale individual components

- Deployment risks affected entire application

- Limited team autonomy

Stage 2: Direct Access to Microservices

Client applications could make requests directly to individual microservices. As Netflix grew, they decomposed the monolith into hundreds of specialized services.

Challenges:

- Exposing hundreds/thousands of microservices to clients was unmanageable

- Network chattiness and latency issues

- Difficult to maintain backward compatibility

Stage 3: Gateway Aggregation Layer

Many use cases span multiple services. For example, rendering the Netflix homepage requires data from movie, production, and talent APIs. The gateway aggregation layer solved this by:

- Consolidating multiple service calls

- Reducing client complexity

- Providing a unified API interface

Netflix components that support this layer:

- Zuul: API gateway

- Eureka: service discovery
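The aggregation idea can be sketched as a single gateway function that fans out to several backends concurrently and returns one merged response. This is a minimal illustration, not Zuul code: the three `fetch_*` functions and their return values are hypothetical stand-ins for the movie, production, and talent APIs.

```python
import asyncio


# Hypothetical stand-ins for the movie, production, and talent APIs.
async def fetch_movies(user_id: str) -> list[str]:
    await asyncio.sleep(0.01)  # simulate network latency
    return ["Movie A", "Movie B"]


async def fetch_productions(user_id: str) -> list[str]:
    await asyncio.sleep(0.01)
    return ["Production X"]


async def fetch_talent(user_id: str) -> list[str]:
    await asyncio.sleep(0.01)
    return ["Actor 1", "Actor 2"]


async def homepage(user_id: str) -> dict[str, list[str]]:
    """Call the three services concurrently and merge the results."""
    movies, productions, talent = await asyncio.gather(
        fetch_movies(user_id),
        fetch_productions(user_id),
        fetch_talent(user_id),
    )
    return {"movies": movies, "productions": productions, "talent": talent}


# The client makes one request instead of three.
page = asyncio.run(homepage("user-42"))
```

Because the calls run concurrently, the gateway latency is close to that of the slowest backend rather than the sum of all three.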

Stage 4: Federated Gateway (Current)

As the number of developers grew and domain complexity increased, even the aggregation layer became difficult to maintain. Netflix adopted GraphQL Federation which allows:

- A single GraphQL gateway that fetches data from all other APIs

- Teams to own their domain’s schema independently

- Unified data graph across the organization
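The ownership model behind federation can be illustrated with a toy gateway in which each team registers the fields it owns and the gateway routes every requested field to its owner. This is a simplified sketch, not GraphQL: there is no query parsing or schema language, and the class, team names, and fields are all hypothetical.

```python
from typing import Callable

Resolver = Callable[[str], object]


class FederatedGateway:
    """Toy gateway: each team registers the fields it owns."""

    def __init__(self) -> None:
        # field name -> (owning team, resolver function)
        self._owners: dict[str, tuple[str, Resolver]] = {}

    def register(self, team: str, fields: dict[str, Resolver]) -> None:
        for name, resolver in fields.items():
            if name in self._owners:
                owner = self._owners[name][0]
                raise ValueError(f"field {name!r} is already owned by {owner}")
            self._owners[name] = (team, resolver)

    def query(self, movie_id: str, fields: list[str]) -> dict[str, object]:
        # Route every requested field to the team that owns it.
        return {name: self._owners[name][1](movie_id) for name in fields}


gateway = FederatedGateway()
gateway.register("movie-team", {"title": lambda mid: f"Title of {mid}"})
gateway.register("talent-team", {"cast": lambda mid: ["Actor 1", "Actor 2"]})

result = gateway.query("m1", ["title", "cast"])
```

Each team can add fields without touching the gateway code, while clients still see a single entry point.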

Technology Stack

Frontend

Mobile Apps

- iOS: Swift

- Android: Kotlin

- Native apps for optimal performance

Web Application

- Framework: React

- API Communication: GraphQL

- Progressive Web App capabilities

Backend Services

Netflix’s backend is built on a microservices architecture with thousands of services:

- API Gateway: Zuul (custom-built)

- Service Discovery: Eureka

- Framework: Spring Boot

- Communication: REST, gRPC, GraphQL
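Service discovery is easier to picture with a small example. The sketch below shows the general idea of a registry where instances send heartbeats and callers look up live addresses. It does not use the Eureka API: the class, the 30-second lease, and the addresses are assumptions made for illustration, and time is passed in explicitly to keep the example deterministic.

```python
class ServiceRegistry:
    """Toy registry: instances stay listed while their heartbeat is fresh."""

    def __init__(self, lease_seconds: float = 30.0) -> None:
        self._lease = lease_seconds
        # service name -> {instance address: time of last heartbeat}
        self._instances: dict[str, dict[str, float]] = {}

    def heartbeat(self, service: str, address: str, now: float) -> None:
        # The first heartbeat registers the instance; later ones renew it.
        self._instances.setdefault(service, {})[address] = now

    def lookup(self, service: str, now: float) -> list[str]:
        # Return only instances whose lease has not expired.
        seen = self._instances.get(service, {})
        return sorted(a for a, t in seen.items() if now - t <= self._lease)


registry = ServiceRegistry(lease_seconds=30.0)
registry.heartbeat("billing", "10.0.0.1:8080", now=0.0)
registry.heartbeat("billing", "10.0.0.2:8080", now=20.0)

# At t=40 the first instance has missed its lease and drops out.
live = registry.lookup("billing", now=40.0)
```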

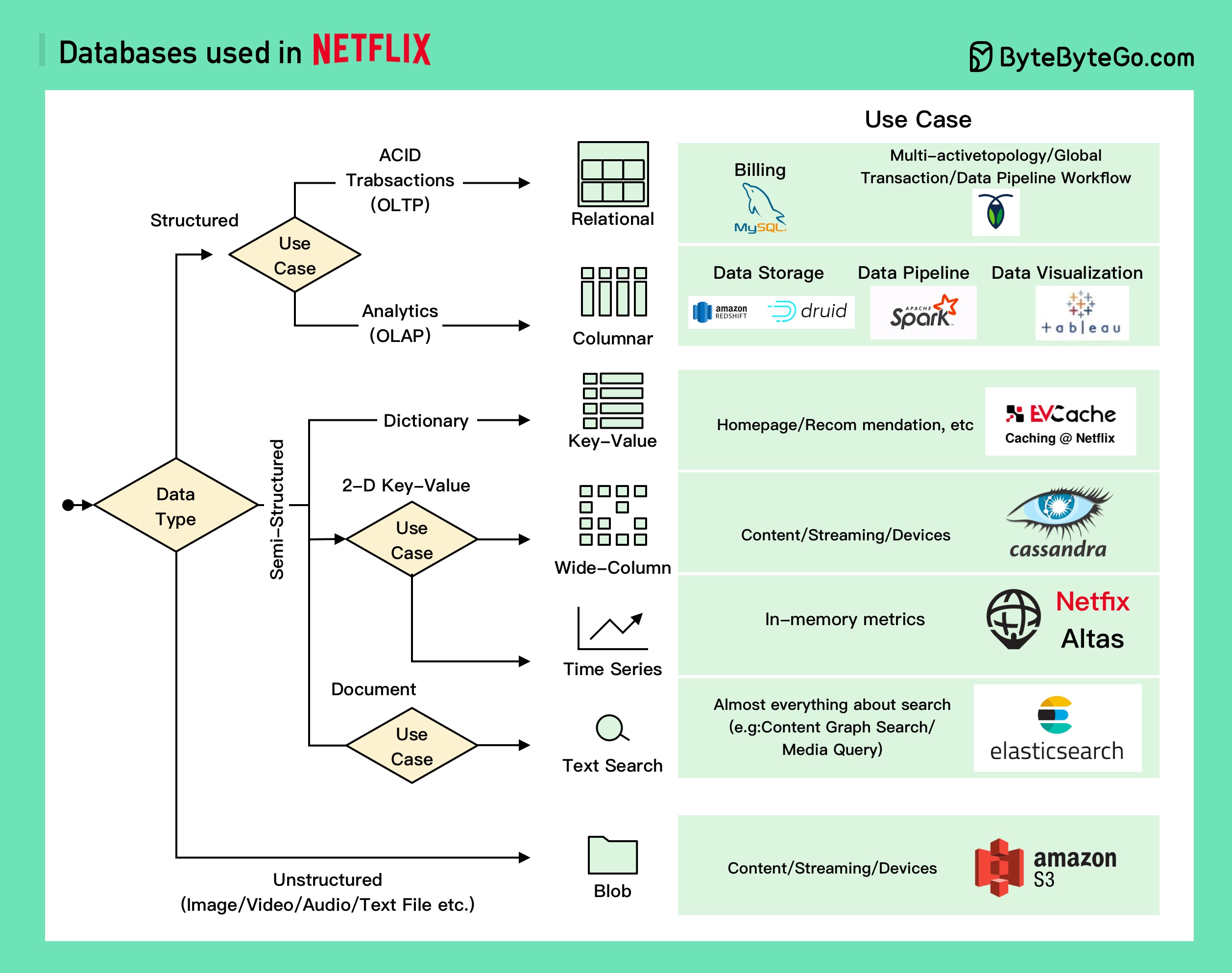

Database Architecture

Netflix uses a polyglot persistence approach, selecting the right database for each use case:

Relational Databases

MySQL - Used for:

- Billing transactions

- Subscriptions

- Tax information

- Revenue tracking

- Multi-region active-active architecture

- Global transactions

- Data pipeline workflows

Columnar Databases

Used primarily for analytics, together with the processing and visualization tools around them:

- Redshift: Structured data warehouse

- Druid: Real-time analytics

- Spark: Data pipeline processing

- Tableau: Data visualization

Key-Value Store

EVCache (built on Memcached):

- Used for 10+ years across most services

- Caches Netflix homepage

- Stores personal recommendations

- Critical for low-latency access

Wide-Column Store

Cassandra - The default choice at Netflix:

- Video and actor information

- User data

- Device information

- Viewing history

- Highly scalable and available

Time-Series Database

Atlas (open-source, in-memory):

- Built by Netflix

- Metrics storage and aggregation

- Real-time operational insights

Object Storage

Amazon S3 - Stores almost everything:

- Image files

- Video content

- Metrics

- Log files

- Big data storage

- Data lake architecture

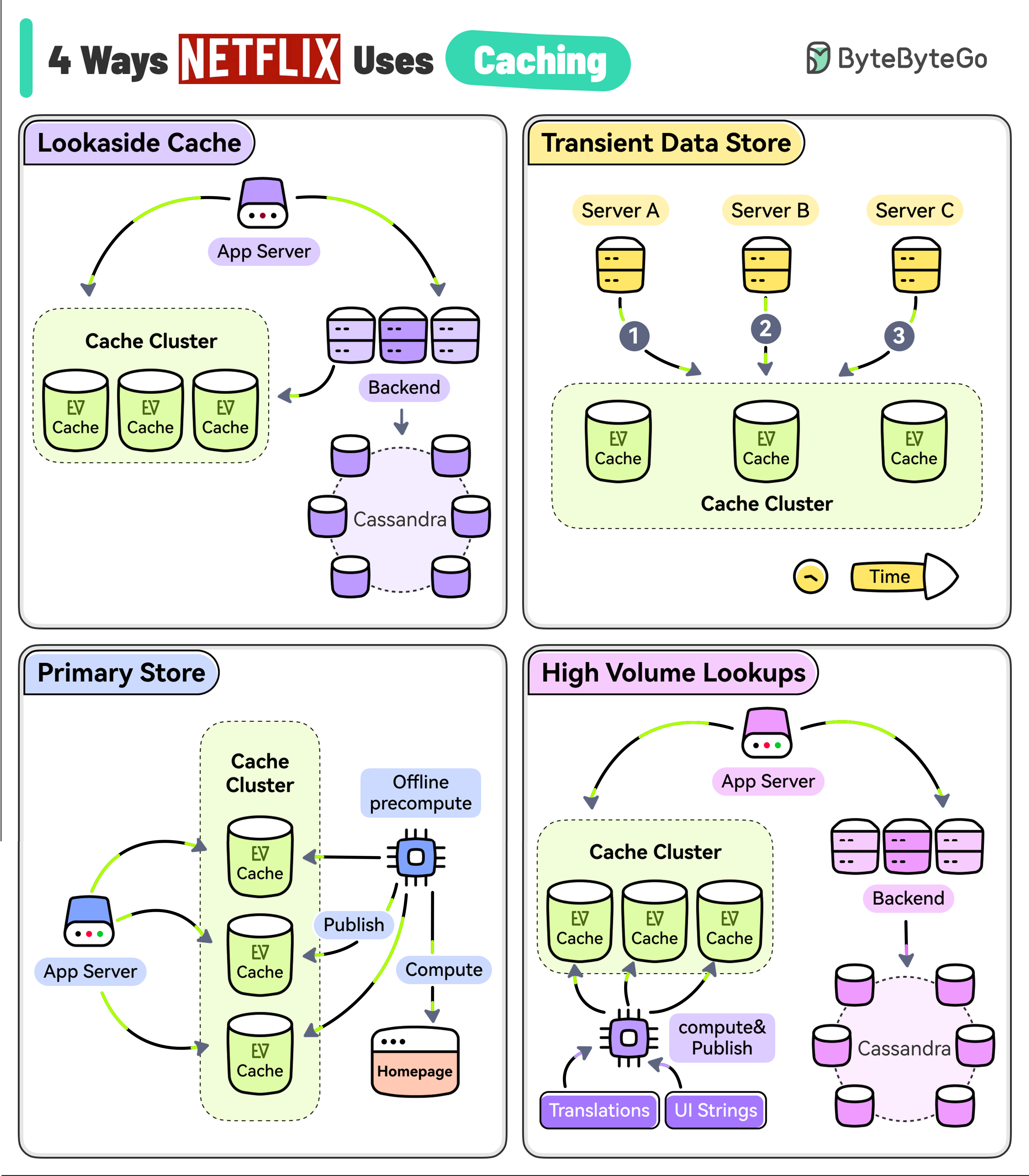

Caching Strategy

Key Insight: Netflix’s goal is to keep users streaming. Because a typical user loses interest after roughly 90 seconds of browsing, reducing latency through caching is critical to maintaining engagement.

1. Lookaside Cache

The most common caching pattern:

- Application requests data from EVCache client

- On a cache miss, fetch the data from the backend service, which reads it from Cassandra

- Store result in cache for future requests

- Return data to application
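The steps above can be sketched in a few lines. This is a generic lookaside cache, not the EVCache client: the in-memory dictionary stands in for the cache, and `load_from_backend` is a hypothetical function representing the backend service and its database.

```python
from typing import Callable


class LookasideCache:
    """Check the cache first; on a miss, load from the backend and store."""

    def __init__(self, load: Callable[[str], str]) -> None:
        self._load = load
        self._store: dict[str, str] = {}  # stand-in for the cache cluster
        self.misses = 0

    def get(self, key: str) -> str:
        if key in self._store:
            return self._store[key]  # cache hit
        self.misses += 1
        value = self._load(key)  # cache miss: go to the backend
        self._store[key] = value  # populate the cache for future requests
        return value


def load_from_backend(key: str) -> str:
    # Hypothetical backend call; a real one would query a service or database.
    return f"value-for-{key}"


cache = LookasideCache(load_from_backend)
first = cache.get("profile:1")   # miss, loaded from the backend
second = cache.get("profile:1")  # hit, served from the cache
```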

2. Transient Data Store

Used for temporary session data:

- Playback session information

- One service starts the session

- Another updates it during playback

- Final service closes the session
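The session lifecycle described above might look like the following sketch, where three functions play the roles of the three services sharing one short-lived store. The store class, the one-hour TTL, and the session fields are assumptions for illustration, and time is passed in explicitly so the example is deterministic.

```python
from typing import Optional


class TransientStore:
    """Toy key-value store whose entries expire after a fixed TTL."""

    def __init__(self, ttl_seconds: float) -> None:
        self._ttl = ttl_seconds
        self._data: dict[str, tuple[float, dict]] = {}  # key -> (expiry, value)

    def put(self, key: str, value: dict, now: float) -> None:
        self._data[key] = (now + self._ttl, value)

    def get(self, key: str, now: float) -> Optional[dict]:
        entry = self._data.get(key)
        if entry is None or entry[0] <= now:
            self._data.pop(key, None)  # drop expired entries lazily
            return None
        return entry[1]

    def delete(self, key: str) -> None:
        self._data.pop(key, None)


store = TransientStore(ttl_seconds=3600)


def start_session(session_id: str, title: str, now: float) -> None:
    store.put(session_id, {"title": title, "position": 0}, now)


def update_position(session_id: str, position: int, now: float) -> None:
    session = store.get(session_id, now)
    if session is not None:
        store.put(session_id, {**session, "position": position}, now)


def close_session(session_id: str, now: float) -> Optional[dict]:
    session = store.get(session_id, now)
    store.delete(session_id)
    return session


start_session("s1", "Some Title", now=0)
update_position("s1", 120, now=60)
final = close_session("s1", now=90)
```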

3. Primary Store

EVCache as the source of truth:

- Netflix runs large-scale pre-compute systems nightly

- Computes personalized homepage for every user profile

- Based on watch history and recommendations

- Written directly to EVCache

- Online services read from cache to build homepage
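A minimal sketch of this pattern follows: a batch job computes one row per profile and writes it to the cache, and the online path only reads. The catalog, the genre-based recommendation rule, and the key format are invented for illustration, and a plain dictionary stands in for EVCache.

```python
# Hypothetical catalog, grouped by genre.
CATALOG = {"drama": ["D1", "D2", "D3"], "comedy": ["C1", "C2"]}
GENRE_OF = {title: genre for genre, titles in CATALOG.items() for title in titles}


def build_row(history: list[str]) -> list[str]:
    """Recommend unwatched titles from genres the profile has already watched."""
    genres = {GENRE_OF[title] for title in history}
    return sorted(t for g in genres for t in CATALOG[g] if t not in history)


def nightly_precompute(histories: dict[str, list[str]], cache: dict) -> None:
    # Batch job: compute every profile's homepage and write it to the cache.
    for profile_id, history in histories.items():
        cache[f"homepage:{profile_id}"] = build_row(history)


def render_homepage(profile_id: str, cache: dict) -> list[str]:
    # Online path: read only from the cache, with no fallback database.
    return cache.get(f"homepage:{profile_id}", [])


cache_store: dict[str, list[str]] = {}  # stand-in for EVCache
nightly_precompute({"p1": ["D1"], "p2": ["C1", "D2"]}, cache_store)

p1_page = render_homepage("p1", cache_store)
p2_page = render_homepage("p2", cache_store)
```

Since the cache is the only copy the online services read, losing it means recomputing rather than refetching, which is why availability of the cache tier matters so much in this pattern.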

4. High Volume Data

For data requiring:

- High access volume

- High availability

- Low latency

How it works:

- An asynchronous process computes the data and publishes it to EVCache

- Applications read it with minimal latency

Video Delivery

Storage and Distribution

Origin Storage

Amazon S3:

- Master copy of all content

- Multiple regions for redundancy

- Source for CDN distribution

Content Delivery

Open Connect CDN:

- Netflix’s custom-built CDN

- Servers placed in ISP data centers

- Reduces internet transit costs

- Improves streaming quality

How Video Streaming Works

- Content Encoding: Videos encoded in multiple bitrates and resolutions

- Distribution: Content pushed to Open Connect edge servers worldwide

- Client Request: User selects content to watch

- Server Selection: Nearest/optimal edge server selected

- Adaptive Streaming: Bitrate adjusts based on network conditions
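The last two steps can be illustrated with two small functions: one picks the edge server with the lowest measured latency, the other picks the highest bitrate that fits within a safety margin of the measured throughput. The bitrate ladder, the 0.8 safety factor, and the server names are assumptions for this sketch and do not describe the actual Netflix selection logic.

```python
# Hypothetical bitrate ladder in kbps, lowest to highest.
BITRATE_LADDER_KBPS = [235, 750, 1750, 3000, 5800]


def pick_server(latency_ms: dict[str, float]) -> str:
    """Choose the edge server with the lowest measured latency."""
    return min(latency_ms, key=latency_ms.get)


def pick_bitrate(throughput_kbps: float, safety: float = 0.8) -> int:
    """Choose the highest bitrate that fits within a fraction of throughput."""
    budget = throughput_kbps * safety
    candidates = [b for b in BITRATE_LADDER_KBPS if b <= budget]
    # Fall back to the lowest rung when even that exceeds the budget.
    return max(candidates) if candidates else BITRATE_LADDER_KBPS[0]


server = pick_server({"edge-a": 40.0, "edge-b": 12.0})
good_network = pick_bitrate(4000)  # budget is 3200 kbps
poor_network = pick_bitrate(100)   # budget is 80 kbps
```

A real player re-runs the bitrate choice continuously as throughput measurements change, which is what makes the streaming adaptive.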

Messaging and Streaming

Netflix employs event-driven architecture for real-time data processing:

- Apache Kafka: Message broker for event streaming

- Apache Flink: Stream processing for real-time analytics

Use Cases:

- User activity tracking

- Real-time recommendations

- Monitoring and alerting

- A/B testing data
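The reason one event stream can serve several of these use cases is that each consumer group tracks its own position in an append-only log. The sketch below models that idea in memory. It is not the Kafka client API: the class, the topic name, and the group names are hypothetical.

```python
from collections import defaultdict


class EventLog:
    """Toy append-only log with an independent offset per consumer group."""

    def __init__(self) -> None:
        self._topics: dict[str, list[dict]] = defaultdict(list)
        self._offsets: dict[tuple[str, str], int] = defaultdict(int)

    def publish(self, topic: str, event: dict) -> None:
        self._topics[topic].append(event)

    def poll(self, topic: str, group: str) -> list[dict]:
        # Return events this group has not seen yet, then advance its offset.
        start = self._offsets[(topic, group)]
        events = self._topics[topic][start:]
        self._offsets[(topic, group)] = start + len(events)
        return events


log = EventLog()
log.publish("user-activity", {"user": "u1", "action": "play"})
log.publish("user-activity", {"user": "u1", "action": "pause"})

recs_batch = log.poll("user-activity", group="recommendations")
recs_again = log.poll("user-activity", group="recommendations")
monitoring_batch = log.poll("user-activity", group="monitoring")
```

Both groups receive the full stream, and neither affects how far the other has read.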

CI/CD Pipeline

Netflix has one of the most mature CI/CD practices in the industry.

Development Tools

- JIRA: Project planning and tracking

- Confluence: Documentation and collaboration

- Git: Version control

Build and Test

- Jenkins: Continuous integration

- Gradle: Build automation

- Spinnaker: Multi-cloud continuous delivery (open-sourced by Netflix)

Deployment

- Spinnaker: Orchestrates deployments across regions

- Canary Deployments: Test changes with small percentage of traffic

- Red/Black Deployments: Quick rollback capability
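Canary routing can be sketched as a deterministic split of traffic by request ID, so that a fixed share of requests reaches the new version. This is an illustration of the idea only, not how Spinnaker implements it: the hashing scheme and the names `canary` and `baseline` are assumptions.

```python
import hashlib


def route(request_id: str, canary_percent: int) -> str:
    """Send roughly canary_percent of requests to the canary deployment."""
    digest = hashlib.sha256(request_id.encode()).digest()
    # Map the request ID to a stable bucket in the range 0..99.
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "canary" if bucket < canary_percent else "baseline"


# With a 5% canary, only a small share of requests hit the new version.
targets = [route(f"req-{i}", canary_percent=5) for i in range(10_000)]
canary_share = targets.count("canary") / len(targets)
```

Hashing keeps the decision stable for a given request ID, and setting `canary_percent` back to 0 sends all traffic to the baseline again, which mirrors the quick rollback that red/black deployments aim for.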

Monitoring and Reliability

- Atlas: Time-series metrics

- PagerDuty: On-call management

- Chaos Monkey: Randomly terminates instances to test resilience

- Simian Army: Suite of chaos engineering tools

Netflix pioneered Chaos Engineering - the practice of intentionally introducing failures to test system resilience. Their Chaos Monkey randomly terminates production instances to ensure the system can handle failures gracefully.
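The core of the experiment fits in a few lines: remove one instance at random, then check that the service still meets its health condition. The sketch below is a toy model and not the Chaos Monkey tool itself. The instance IDs, the minimum-healthy threshold, and the seeded random generator are assumptions for illustration.

```python
import random


def terminate_random_instance(instances: list[str], rng: random.Random) -> str:
    """Remove one randomly chosen instance from the fleet and return its ID."""
    victim = rng.choice(instances)
    instances.remove(victim)
    return victim


def service_is_healthy(instances: list[str], min_healthy: int) -> bool:
    # The service counts as healthy while enough instances remain.
    return len(instances) >= min_healthy


fleet = ["i-001", "i-002", "i-003"]
original = list(fleet)

victim = terminate_random_instance(fleet, random.Random(0))
healthy = service_is_healthy(fleet, min_healthy=2)
```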

Key Architecture Decisions

1. Cloud-Native from the Start

- Netflix migrated to AWS between 2008 and 2016

- One of the largest AWS deployments

- Multi-region for disaster recovery

- Auto-scaling based on demand

2. Polyglot Persistence

- Different databases for different use cases

- No single database solution

- Optimized for specific access patterns

3. Service-Oriented Architecture

- Microservices for business capabilities

- Teams own services end-to-end

- Independent deployment and scaling

4. API Gateway Evolution

- From monolith → direct access → gateway → federated gateway

- GraphQL for flexible data fetching

- Reduced network overhead

5. Heavy Investment in Observability

- Custom tooling (Atlas, etc.)

- Distributed tracing

- Real-time anomaly detection

Lessons Learned

Build for Failure

Assume everything will fail. Netflix’s architecture is designed with redundancy and graceful degradation at every level.

Right Tool for the Job

Netflix uses multiple databases and technologies, each selected for specific use cases rather than standardizing on one solution.

Gateway Evolution

Start simple (monolith), evolve to microservices when needed, and adopt aggregation layers (GraphQL) as complexity grows.

Cache Everything

With 90-second attention spans, latency kills engagement. Aggressive caching at multiple layers is essential.

Scale Statistics

- 200+ million subscribers worldwide

- 190+ countries served

- Billions of hours streamed monthly

- Thousands of microservices

- Hundreds of thousands of streaming sessions concurrently

- Petabytes of video content