Overview

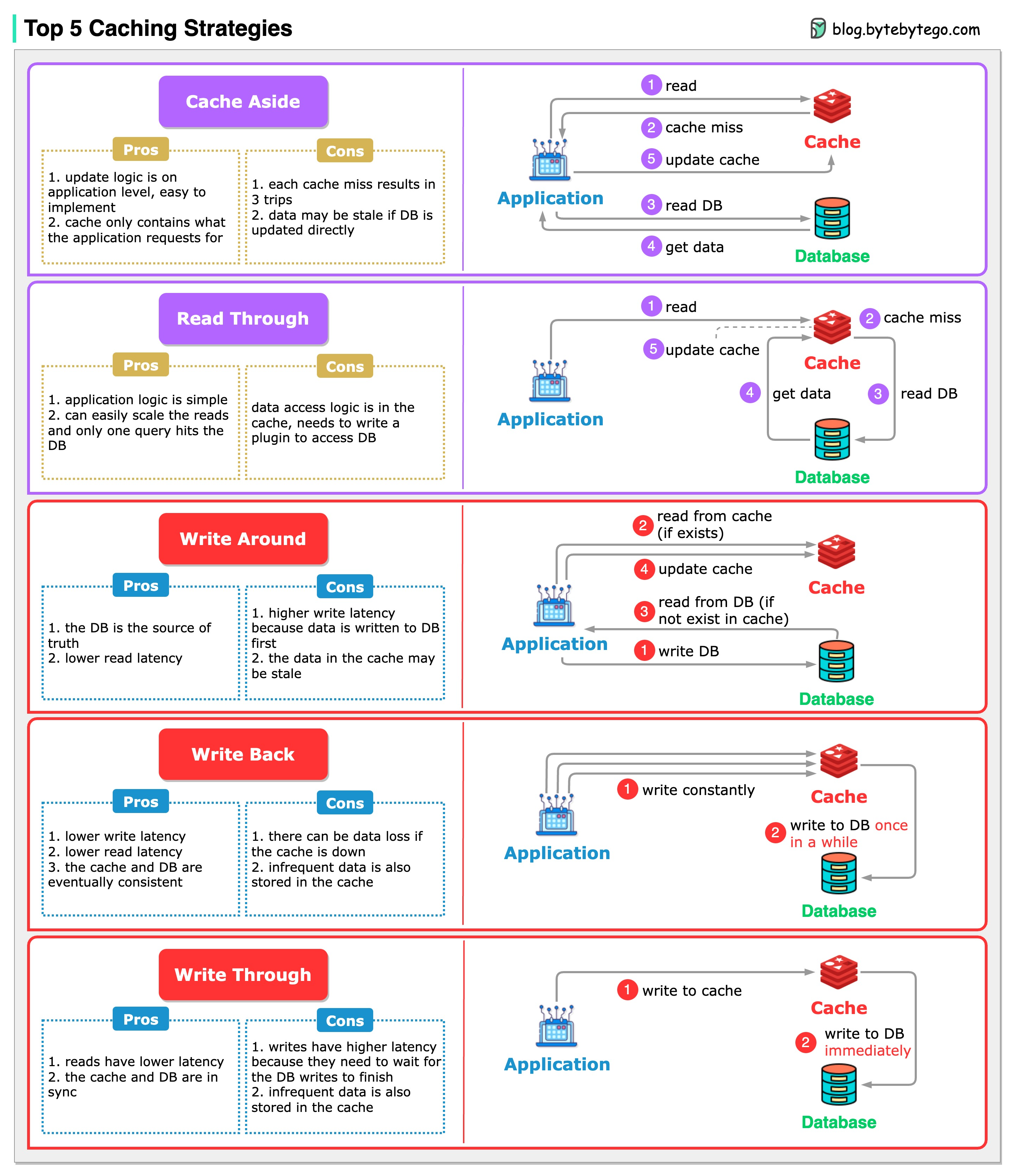

When we introduce a cache into the architecture, synchronization between the cache and the database becomes inevitable. Choosing the right caching strategy is crucial for maintaining data consistency while optimizing performance.

Caching strategies are often used in combination. For example, write-around is frequently paired with cache-aside: writes go straight to the database, and the cache-aside read path reloads the data on the next miss.

Read Strategies

Read strategies determine how your application retrieves data from the cache and database.

Cache-Aside (Lazy Loading)

How Cache-Aside Works

The application is responsible for managing the cache:

- Application checks cache for data

- If cache hit: Return data from cache

- If cache miss:

  - Query database

  - Write data to cache

  - Return data to application
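
The steps above can be sketched in a few lines. This is a minimal illustration, not a production implementation: the two dictionaries `cache` and `database` are hypothetical stand-ins for a real cache client and a real data store, and the key `user:1` is made up.

```python
from typing import Optional

# Hypothetical stand-ins: one dict plays the cache, another plays the database.
cache: dict[str, str] = {}
database: dict[str, str] = {"user:1": "Ada"}


def get(key: str) -> Optional[str]:
    """Cache-aside read: the application checks the cache, then falls back to the database."""
    if key in cache:                 # cache hit: return straight from the cache
        return cache[key]
    value = database.get(key)        # cache miss: query the database
    if value is not None:
        cache[key] = value           # write the result to the cache for later reads
    return value
```

Note that the application code, not the cache, contains the fallback logic. That is what makes the pattern resilient to cache failures, and also why the application has to manage the cache itself.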

Pros

- Cache only what you need

- Resilient to cache failures

- Simple to implement

- Works with any database

Cons

- Initial request penalty (cache miss)

- Potential for stale data

- Application manages cache logic

- Thundering herd problem

Best For

- Read-heavy workloads

- Data that’s accessed unpredictably

- When you want full control over caching logic

Read-Through

How Read-Through Works

The cache sits between the application and database, handling all data retrieval:

- Application requests data from cache

- If cache hit: Cache returns data

- If cache miss:

  - Cache automatically queries database

  - Cache stores the data

  - Cache returns data to application
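
To contrast this with cache-aside, here is a minimal sketch in which the cache owns the loading logic. The class name `ReadThroughCache`, its `loader` parameter, and the sample data are assumptions made for illustration; real read-through support depends on the cache library in use.

```python
from typing import Callable, Optional


class ReadThroughCache:
    """The cache holds the loader, so callers never query the database directly."""

    def __init__(self, loader: Callable[[str], Optional[str]]) -> None:
        self._loader = loader
        self._store: dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        if key in self._store:           # cache hit
            return self._store[key]
        value = self._loader(key)        # cache miss: the cache itself queries the database
        if value is not None:
            self._store[key] = value     # the cache stores the loaded value
        return value


# Hypothetical database; the application only ever calls cache.get().
database = {"product:42": "Keyboard"}
cache = ReadThroughCache(loader=database.get)
```

The application code shrinks to `cache.get("product:42")`, at the cost of coupling data access to the cache layer.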

Pros

- Simplified application code

- Consistent caching logic

- Cache manages data loading

- Always returns data if available

Cons

- Requires cache library support

- Initial request penalty

- Tight coupling to cache

- Less flexibility

Best For

- Applications with consistent read patterns

- When you want to abstract cache logic

- Microservices with shared cache infrastructure

Write Strategies

Write strategies determine how updates are synchronized between cache and database.

Write-Through

How Write-Through Works

Data is written to both cache and database synchronously:

- Application writes data to cache

- Cache immediately writes to database

- Both operations complete before returning success

- Read requests always hit fresh data
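
A minimal sketch of the synchronous write path follows. The `WriteThroughCache` class and the dictionary used as a database are hypothetical. One deliberate choice: the sketch persists to the database before updating the cached copy, so that a failed database write does not leave an unpersisted value in the cache. The steps above describe the cache as the entry point; both orderings satisfy the rule that both writes complete before success is returned.

```python
from typing import Optional


class WriteThroughCache:
    """Every write updates the database and the cache before returning."""

    def __init__(self, database: dict[str, str]) -> None:
        self._database = database        # stand-in for a real database client
        self._store: dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        # If the database write raises, the cache is left untouched.
        self._database[key] = value
        self._store[key] = value

    def get(self, key: str) -> Optional[str]:
        # Keys written through this object are always served fresh from the cache.
        return self._store.get(key)
```

The extra write latency comes from `put` waiting on the database call on every write.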

Pros

- Cache always consistent

- No stale data

- Simple consistency model

- Data durability

Cons

- Higher write latency

- Wasted cache space (infrequently read data)

- Database and cache must both succeed

- More write load

Best For

- Applications requiring strong consistency

- Critical data that can’t be lost

- Read-after-write consistency needed

Write-Around

How Write-Around Works

Writes bypass the cache and go directly to the database:

- Application writes directly to database

- Cache is not updated

- On next read, cache miss occurs

- Data is loaded into cache (cache-aside pattern)
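
The flow can be sketched as below, again with dictionaries as hypothetical stand-ins for the cache and the database. The `cache.pop` line is an addition beyond the four steps: it reflects the "Need cache invalidation" item under Cons, because a key that was cached before the write would otherwise keep serving the old value.

```python
from typing import Optional

# Hypothetical state: the key is already cached when the write arrives.
cache: dict[str, str] = {"user:1": "old name"}
database: dict[str, str] = {"user:1": "old name"}


def write(key: str, value: str) -> None:
    database[key] = value     # the write goes straight to the database
    cache.pop(key, None)      # the cache is not updated, only invalidated


def read(key: str) -> Optional[str]:
    if key in cache:
        return cache[key]
    value = database.get(key)         # first read after a write is a cache miss
    if value is not None:
        cache[key] = value            # cache-aside load
    return value
```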

Pros

- No cache pollution from write-once data

- Faster write operations

- Cache stores only read data

- Simple to implement

Cons

- Cache miss on first read after write

- Temporary inconsistency

- Read-after-write penalty

- Need cache invalidation

Best For

- Write-heavy workloads

- Data that’s written but rarely read

- Applications that can tolerate eventual consistency

Write-Back (Write-Behind)

How Write-Back Works

Writes are batched and asynchronously written to the database:

- Application writes to cache

- Write acknowledged immediately

- Cache asynchronously writes to database (batched or delayed)

- Database eventually consistent
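
The sketch below illustrates the idea with a set of dirty keys that is written out in one batch. It is a simplification under stated assumptions: `WriteBackCache` is a hypothetical class, the database is a dictionary, and `flush()` is called explicitly where a real system would typically run it from a background worker or timer.

```python
from typing import Optional


class WriteBackCache:
    """Writes are acknowledged from the cache and persisted later in batches."""

    def __init__(self, database: dict[str, str]) -> None:
        self._database = database
        self._store: dict[str, str] = {}
        self._dirty: set[str] = set()    # keys changed since the last flush

    def put(self, key: str, value: str) -> None:
        self._store[key] = value         # acknowledged immediately, not yet durable
        self._dirty.add(key)

    def get(self, key: str) -> Optional[str]:
        if key in self._store:
            return self._store[key]
        return self._database.get(key)

    def flush(self) -> int:
        """Persist all dirty keys in one batch and return how many were written."""
        batch = {key: self._store[key] for key in self._dirty}
        self._database.update(batch)
        self._dirty.clear()
        return len(batch)
```

Two writes to the same key between flushes reach the database as one write, which is where the reduced database load comes from. Anything still in `_dirty` when the process dies is lost, which is the data-loss risk listed under Cons.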

Pros

- Very fast writes

- Reduced database load

- Batch optimizations possible

- Better throughput

Cons

- Risk of data loss (cache failure)

- Complex implementation

- Eventual consistency

- Requires persistence mechanism

Best For

- High-throughput write systems

- Applications that can tolerate some data loss

- Time-series data or logging

- Analytics and metrics collection

Strategy Comparison

| Strategy | Read Latency | Write Latency | Consistency |
|---|---|---|---|
| Cache-Aside | Low (hit) / High (miss) | Low | Eventual |
| Read-Through | Low (hit) / High (miss) | N/A | Eventual |
| Write-Through | Low | High | Strong |
| Write-Around | Variable | Low | Eventual |
| Write-Back | Low | Very Low | Eventual |

Combined Strategies

Real-world systems often combine multiple strategies for optimal performance.

Cache-Aside + Write-Around

- Fast writes (no cache update)

- Efficient cache usage (only cached when read)

- Simple consistency model

Read-Through + Write-Through

- Consistent reads and writes

- Abstracted caching logic

- Strong consistency guarantees

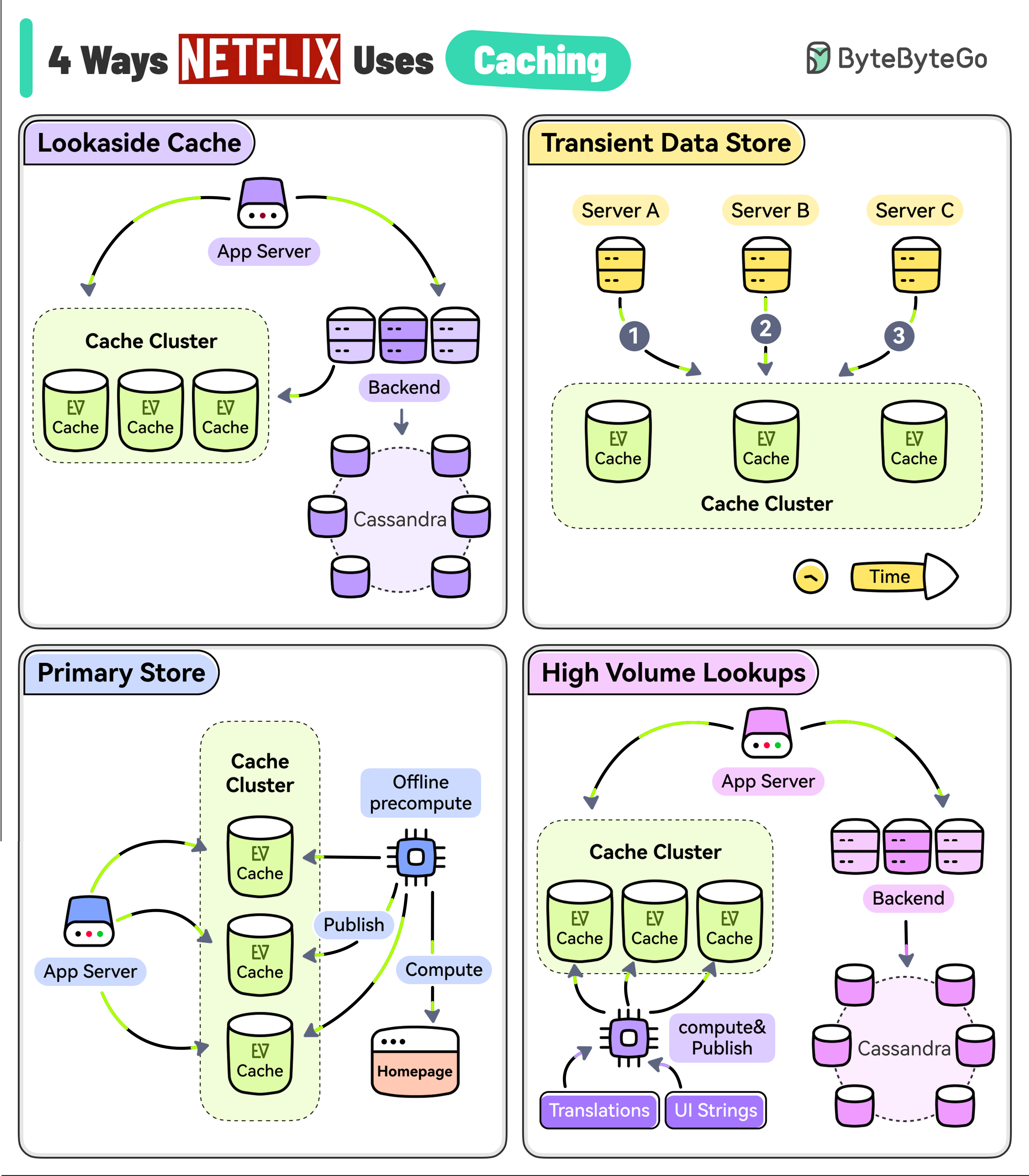

Netflix Caching Example

Netflix uses EVCache (a distributed key-value store) in multiple ways:

Lookaside Cache

Pattern: Cache-Aside

The application tries EVCache first, falls back to Cassandra on a miss, then updates the cache for future requests.

Transient Data Store

Pattern: Write-Through

Playback session data is written to the cache with eventual persistence, ensuring session continuity across services.

Primary Store

Pattern: Write-Back

Pre-computed homepage data is written in batch overnight and read by online services with high availability.

High Volume Data

Pattern: Read-Through

UI strings and translations are computed asynchronously and published to EVCache for low-latency reads.

Cache Invalidation

Strategies for keeping the cache fresh:

- TTL-Based

- Event-Based

- Version-Based
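
The page names the three approaches without detail, so the following is one plausible reading of each rather than a definitive description. All names (`TTLCache`, `on_record_updated`, `versioned_key`) are hypothetical, and the injectable `clock` parameter exists only to make expiry easy to demonstrate.

```python
import time
from typing import Callable, Optional


class TTLCache:
    """TTL-based: entries expire a fixed number of seconds after being written."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict[str, tuple[float, str]] = {}

    def set(self, key: str, value: str) -> None:
        self._store[key] = (self._clock() + self._ttl, value)

    def get(self, key: str) -> Optional[str]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:   # expired: drop the entry and report a miss
            del self._store[key]
            return None
        return value

    def delete(self, key: str) -> None:
        self._store.pop(key, None)


def on_record_updated(cache: TTLCache, key: str) -> None:
    """Event-based: a change notification (assumed to come from the data store) evicts the entry."""
    cache.delete(key)


def versioned_key(key: str, version: int) -> str:
    """Version-based: bumping the version makes readers ignore the old entry."""
    return f"{key}:v{version}"
```

These approaches are often layered, for example event-based eviction with a TTL as a safety net for missed events.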

Best Practices

Choose the right strategy

Match strategy to your workload:

- Read-heavy: Cache-Aside or Read-Through

- Write-heavy: Write-Around or Write-Back

- Consistency-critical: Write-Through

Next Steps

Redis Caching

Deep dive into Redis implementation

Cache Eviction

Learn about eviction policies

CDN Caching

Explore edge caching strategies